CentOS 8 has been out for a while, and many readers have started using it. Kubernetes 1.18 has also been released, so in this post I use kubeadm to deploy Kubernetes on CentOS 8.

1 System preparation

Check the system version

[root@localhost]# cat /etc/centos-release

CentOS Linux release 8.1.1911 (Core)

Configure the network

[root@localhost ~]# cat /etc/sysconfig/network-scripts/ifcfg-enp0s3

TYPE=Ethernet

PROXY_METHOD=none

BROWSER_ONLY=no

BOOTPROTO=static

DEFROUTE=yes

IPV4_FAILURE_FATAL=no

IPV6INIT=yes

IPV6_AUTOCONF=yes

IPV6_DEFROUTE=yes

IPV6_FAILURE_FATAL=no

IPV6_ADDR_GEN_MODE=stable-privacy

NAME=enp0s3

UUID=039303a5-c70d-4973-8c91-97eaa071c23d

DEVICE=enp0s3

ONBOOT=yes

IPADDR=192.168.122.21

NETMASK=255.255.255.0

GATEWAY=192.168.122.1

DNS1=223.5.5.5

Add the Aliyun repository

[root@localhost ~]# rm -rfv /etc/yum.repos.d/*

[root@localhost ~]# curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-8.repo

Configure the hostname

[root@master01 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.122.21 master01.paas.com master01

Disable swap and comment out the swap entry in /etc/fstab
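Commenting out the swap entry can also be done without opening an editor. The following is a minimal sketch, not the author's method: `comment_out_swap` is a hypothetical helper name, and it assumes the filesystem type column (third field) of the entry reads `swap`, as in the fstab listing in this section. It only prints the edited text, so nothing changes until the result is copied back.

```shell
# comment_out_swap FILE: print FILE with every active fstab entry whose
# filesystem type (third field) is "swap" prefixed with '#'.
# Hypothetical helper for illustration; it does not modify FILE itself.
comment_out_swap() {
  awk '
    /^[[:space:]]*#/ { print; next }          # already a comment: keep as-is
    $3 == "swap"     { print "#" $0; next }   # active swap entry: comment it out
    { print }                                 # everything else passes through
  ' "$1"
}
```

After reviewing the output, something like `comment_out_swap /etc/fstab > /tmp/fstab.new && cp /tmp/fstab.new /etc/fstab` could apply it.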

[root@master01 ~]# swapoff -a

[root@master01 ~]# cat /etc/fstab

#

# /etc/fstab

# Created by anaconda on Tue Mar 31 22:44:34 2020

#

# Accessible filesystems, by reference, are maintained under '/dev/disk/'.

# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info.

#

# After editing this file, run 'systemctl daemon-reload' to update systemd

# units generated from this file.

#

/dev/mapper/cl-root / xfs defaults 0 0

UUID=5fecb240-379b-4331-ba04-f41338e81a6e /boot ext4 defaults 1 2

/dev/mapper/cl-home /home xfs defaults 0 0

#/dev/mapper/cl-swap swap swap defaults 0 0

Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains

[root@master01 ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

[root@master01 ~]# sysctl --system

2 Install common packages

[root@master01 ~]# yum install vim bash-completion net-tools gcc -y

3 Install docker-ce from the Aliyun repository

[root@master01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

[root@master01 ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@master01 ~]# yum -y install docker-ce

If installing docker-ce fails with the following error:

[root@master01 ~]# yum -y install docker-ce

CentOS-8 - Base - mirrors.aliyun.com 14 kB/s | 3.8 kB 00:00

CentOS-8 - Extras - mirrors.aliyun.com 6.4 kB/s | 1.5 kB 00:00

CentOS-8 - AppStream - mirrors.aliyun.com 16 kB/s | 4.3 kB 00:00

Docker CE Stable - x86_64 40 kB/s | 22 kB 00:00

Error:

Problem: package docker-ce-3:19.03.8-3.el7.x86_64 requires containerd.io >= 1.2.2-3, but none of the providers can be installed

- cannot install the best candidate for the job

- package containerd.io-1.2.10-3.2.el7.x86_64 is excluded

- package containerd.io-1.2.13-3.1.el7.x86_64 is excluded

- package containerd.io-1.2.2-3.3.el7.x86_64 is excluded

- package containerd.io-1.2.2-3.el7.x86_64 is excluded

- package containerd.io-1.2.4-3.1.el7.x86_64 is excluded

- package containerd.io-1.2.5-3.1.el7.x86_64 is excluded

- package containerd.io-1.2.6-3.3.el7.x86_64 is excluded

(try to add '--skip-broken' to skip uninstallable packages or '--nobest' to use not only best candidate packages)

Solution

[root@master01 ~]# wget https://download.docker.com/linux/centos/7/x86_64/edge/Packages/containerd.io-1.2.6-3.3.el7.x86_64.rpm

[root@master01 ~]# yum install containerd.io-1.2.6-3.3.el7.x86_64.rpm

After that, installing docker-ce succeeds.
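The error above states that docker-ce requires containerd.io >= 1.2.2-3. As a rough check that a given containerd.io version satisfies that bound, here is a small sketch. `version_ge` is a hypothetical helper, and it relies on GNU `sort -V`, whose ordering is close to but not identical to RPM's own version comparison, so treat the result as a hint only.

```shell
# version_ge A B: succeed when version string A sorts at or after B
# under GNU "sort -V" ordering (an approximation of RPM comparison).
version_ge() {
  # If B is the smaller (or equal) of the two, then A >= B.
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}
```

The installed version can be obtained with `rpm -q --qf '%{VERSION}-%{RELEASE}\n' containerd.io` and passed as the first argument, for example `version_ge 1.2.6-3.3.el7 1.2.2-3 && echo ok`.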

Add the Aliyun Docker registry mirror (accelerator)

[root@master01 ~]# mkdir -p /etc/docker

[root@master01 ~]# tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://fl791z1h.mirror.aliyuncs.com"]

}

EOF

[root@master01 ~]# systemctl daemon-reload

[root@master01 ~]# systemctl restart docker

4 Install kubectl, kubelet, and kubeadm

Add the Aliyun Kubernetes repository

[root@master01 ~]# cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install the packages

[root@master01 ~]# yum install kubectl kubelet kubeadm

[root@master01 ~]# systemctl enable kubelet

5 Initialize the Kubernetes cluster

[root@master01 ~]# kubeadm init --kubernetes-version=1.18.0 \

--apiserver-advertise-address=192.168.122.21 \

--image-repository registry.aliyuncs.com/google_containers \

--service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16

The pod network is 10.122.0.0/16, and the API server address is the master's own IP.
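The pod and service ranges can be chosen freely as long as they do not overlap the host network (192.168.122.0/24 here). A quick way to sanity-check a chosen range is sketched below in plain bash. The function names are made up for this example, and only IPv4 CIDRs are handled.

```shell
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
  local IFS=. a b c d
  read -r a b c d <<<"$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# cidr_range CIDR: print "first last", the integer bounds of the block.
cidr_range() {
  local ip=${1%/*} bits=${1#*/} base mask
  base=$(ip_to_int "$ip")
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  echo "$(( base & mask )) $(( (base & mask) | (~mask & 0xFFFFFFFF) ))"
}

# cidrs_overlap CIDR1 CIDR2: succeed when the two blocks share any address.
cidrs_overlap() {
  local s1 e1 s2 e2
  read -r s1 e1 <<<"$(cidr_range "$1")"
  read -r s2 e2 <<<"$(cidr_range "$2")"
  [ "$s1" -le "$e2" ] && [ "$s2" -le "$e1" ]
}
```

For example, `cidrs_overlap 10.122.0.0/16 192.168.122.0/24 && echo "conflict"` prints nothing for the values used in this article.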

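This step is critical. By default kubeadm downloads the required images from the official registry k8s.gcr.io, which is not reachable from mainland China, so --image-repository is used to point at the Aliyun image registry.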
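The kubeadm output itself notes that the images can be pulled beforehand with 'kubeadm config images pull'. The sketch below only prints the pre-pull and init commands so they can be reviewed before being run. `print_init_commands` is a hypothetical helper, the CIDRs are the ones used in this article, and the repository default is the Aliyun mirror named above.

```shell
# print_init_commands VERSION ADVERTISE_IP [REPO]: echo (do not run) the
# image pre-pull command and the kubeadm init command for review.
# Hypothetical helper; the CIDR values are the ones from this article.
print_init_commands() {
  local version=$1 ip=$2 repo=${3:-registry.aliyuncs.com/google_containers}
  echo "kubeadm config images pull --kubernetes-version=${version} --image-repository ${repo}"
  echo "kubeadm init --kubernetes-version=${version} --apiserver-advertise-address=${ip} --image-repository ${repo} --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16"
}
```

Calling `print_init_commands 1.18.0 192.168.122.21` prints both commands. The advertise address has to be replaced with the IP of your own master.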

When the cluster initializes successfully, it returns the following output:

W0408 09:36:36.121603 14098 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.0

[preflight] Running pre-flight checks

[WARNING FileExisting-tc]: tc not found in system path

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [master01.paas.com kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.10.0.1 192.168.122.21]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [master01.paas.com localhost] and IPs [192.168.122.21 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [master01.paas.com localhost] and IPs [192.168.122.21 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W0408 09:36:43.343191 14098 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W0408 09:36:43.344303 14098 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 23.002541 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master01.paas.com as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node master01.paas.com as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: v2r5a4.veazy2xhzetpktfz

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.122.21:6443 --token v2r5a4.veazy2xhzetpktfz \

--discovery-token-ca-cert-hash sha256:daded8514c8350f7c238204979039ff9884d5b595ca950ba8bbce80724fd65d4

[root@master01 ~]#

Record the last part of the output (the kubeadm join command). It has to be run on the other nodes when they join the Kubernetes cluster.
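If the init output was saved to a file, the join command can be pulled out of it instead of being copied by hand. This is a minimal sketch under that assumption: `extract_join_command` is a hypothetical helper, and it expects the two-line form that kubeadm prints, with a trailing backslash on the first line and the hash on the line directly after it.

```shell
# extract_join_command: read saved "kubeadm init" output on stdin and
# print the join command on a single line.
# Hypothetical helper; assumes the continuation line directly follows.
extract_join_command() {
  grep -A1 '^[[:space:]]*kubeadm join' \
    | sed 's/\\$//' \
    | tr -s ' \t\n' ' ' \
    | sed 's/^ //; s/ $//'
}
```

Usage would be along the lines of `extract_join_command < kubeadm-init.log`, where the file name is only an example. Bootstrap tokens expire after 24 hours by default, so later on `kubeadm token create --print-join-command` on the master prints a fresh join command.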

Following the prompt, set up the kubectl configuration

[root@master01 ~]# mkdir -p $HOME/.kube

[root@master01 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@master01 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

Run the following command to enable kubectl auto-completion

[root@master01 ~]# source <(kubectl completion bash)

Check the nodes and pods

[root@master01 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

master01.paas.com NotReady master 2m29s v1.18.0

[root@master01 ~]# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-7ff77c879f-fsj9l 0/1 Pending 0 2m12s

kube-system coredns-7ff77c879f-q5ll2 0/1 Pending 0 2m12s

kube-system etcd-master01.paas.com 1/1 Running 0 2m22s

kube-system kube-apiserver-master01.paas.com 1/1 Running 0 2m22s

kube-system kube-controller-manager-master01.paas.com 1/1 Running 0 2m22s

kube-system kube-proxy-th472 1/1 Running 0 2m12s

kube-system kube-scheduler-master01.paas.com 1/1 Running 0 2m22s

[root@master01 ~]#

The node is NotReady because the coredns pods have not started; the pod network add-on is still missing.
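Spotting the unhealthy entries in listings like the two above gets tedious on larger clusters. The sketch below filters the plain-text output of the two kubectl commands. Both function names are made up for this example, and they assume the default column layout shown above (STATUS in column 2 for nodes, READY and STATUS in columns 3 and 4 for pods with --all-namespaces).

```shell
# not_ready_nodes: read "kubectl get node" output on stdin and print the
# names of nodes whose STATUS column is anything other than "Ready".
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# pods_not_ready: read "kubectl get pod --all-namespaces" output on stdin
# and print namespace/name for pods that are not Running or not fully ready.
pods_not_ready() {
  awk 'NR > 1 {
    split($3, r, "/")    # READY looks like "1/1"
    if ($4 != "Running" || r[1] != r[2]) print $1 "/" $2
  }'
}
```

Typical use would be `kubectl get node | not_ready_nodes` and `kubectl get pod --all-namespaces | pods_not_ready`. Note that pods of finished jobs (STATUS Completed) are reported as well.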

6 Install the Calico network

[root@master01 ~]# kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

serviceaccount/calico-node created

deployment.apps/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

Check the pods and the node

[root@master01 ~]# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-kube-controllers-555fc8cc5c-k8rbk 1/1 Running 0 36s

kube-system calico-node-5km27 1/1 Running 0 36s

kube-system coredns-7ff77c879f-fsj9l 1/1 Running 0 5m22s

kube-system coredns-7ff77c879f-q5ll2 1/1 Running 0 5m22s

kube-system etcd-master01.paas.com 1/1 Running 0 5m32s

kube-system kube-apiserver-master01.paas.com 1/1 Running 0 5m32s

kube-system kube-controller-manager-master01.paas.com 1/1 Running 0 5m32s

kube-system kube-proxy-th472 1/1 Running 0 5m22s

kube-system kube-scheduler-master01.paas.com 1/1 Running 0 5m32s

[root@master01 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

master01.paas.com Ready master 5m47s v1.18.0

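[root@master01 ~]#

The cluster is now in a healthy state.

7 Install kubernetes-dashboard

The Service in the official dashboard deployment does not use a NodePort. Download the YAML file locally and add a NodePort to the Service.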

[root@master01 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

[root@master01 ~]# vim recommended.yaml

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000
  selector:
    k8s-app: kubernetes-dashboard
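The same two-line change can be scripted instead of made in vim. The following awk sketch is one possible approach, not part of the original walkthrough. `add_nodeport` is a hypothetical helper, and it assumes the Service is laid out the way it appears above: `kind: Service` before the metadata, two-space indentation, and `targetPort: 8443` under the port entry. If the downloaded file is formatted differently, the patterns will not match and the file passes through unchanged.

```shell
# add_nodeport FILE [PORT]: print FILE with the kubernetes-dashboard
# Service switched to type NodePort on PORT (default 30000).
# Hypothetical helper; relies on the indentation shown in the article.
add_nodeport() {
  awk -v port="${2:-30000}" '
    /^---/                           { is_svc = 0; is_dash = 0 }   # new YAML document
    /^kind: Service$/                { is_svc = 1 }
    /^  name: kubernetes-dashboard$/ { is_dash = 1 }
    { print }
    is_svc && is_dash && /^spec:$/                  { print "  type: NodePort" }
    is_svc && is_dash && /^      targetPort: 8443$/ { print "      nodePort: " port }
  ' "$1"
}
```

For instance, `add_nodeport recommended.yaml > dashboard-nodeport.yaml` writes a patched copy (the output file name is only an example) that can be inspected before it is applied.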

[root@master01 ~]# kubectl create -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

Check the pods and the service

[root@master01 ~]# kubectl get pod -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-dc6947fbf-869kf 1/1 Running 0 37s

kubernetes-dashboard-5d4dc8b976-sdxxt 1/1 Running 0 37s

[root@master01 ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.10.58.93 <none> 8000/TCP 44s

kubernetes-dashboard NodePort 10.10.132.66 <none> 443:30000/TCP 44s

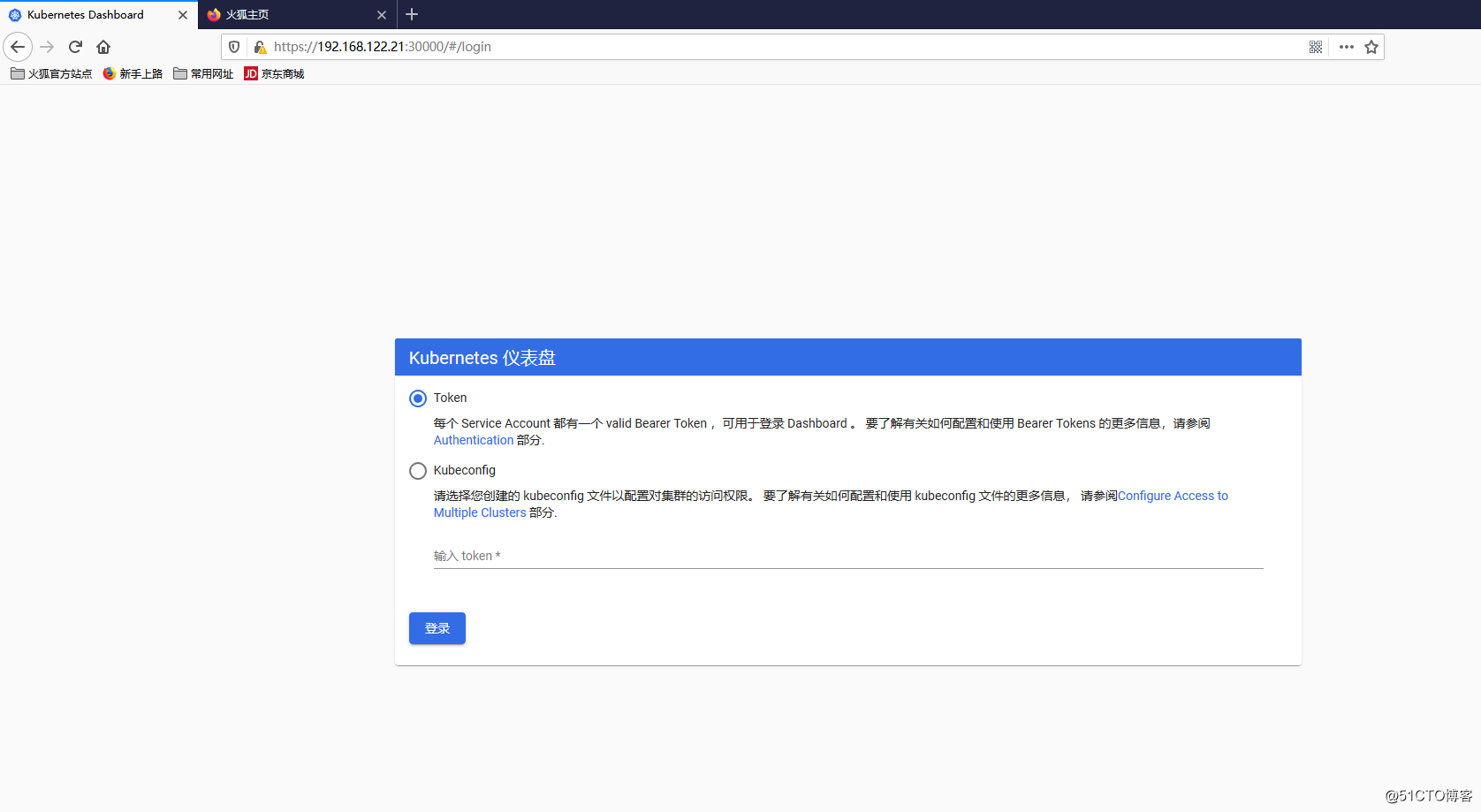

[root@master01 ~]#通过页面访问,推荐使用firefox浏览器

使用token进行登录,执行下面命令获取token

[root@master01 ~]# kubectl describe secrets -n kubernetes-dashboard kubernetes-dashboard-token-t4hxz | grep token | awk 'NR==3{print $2}'

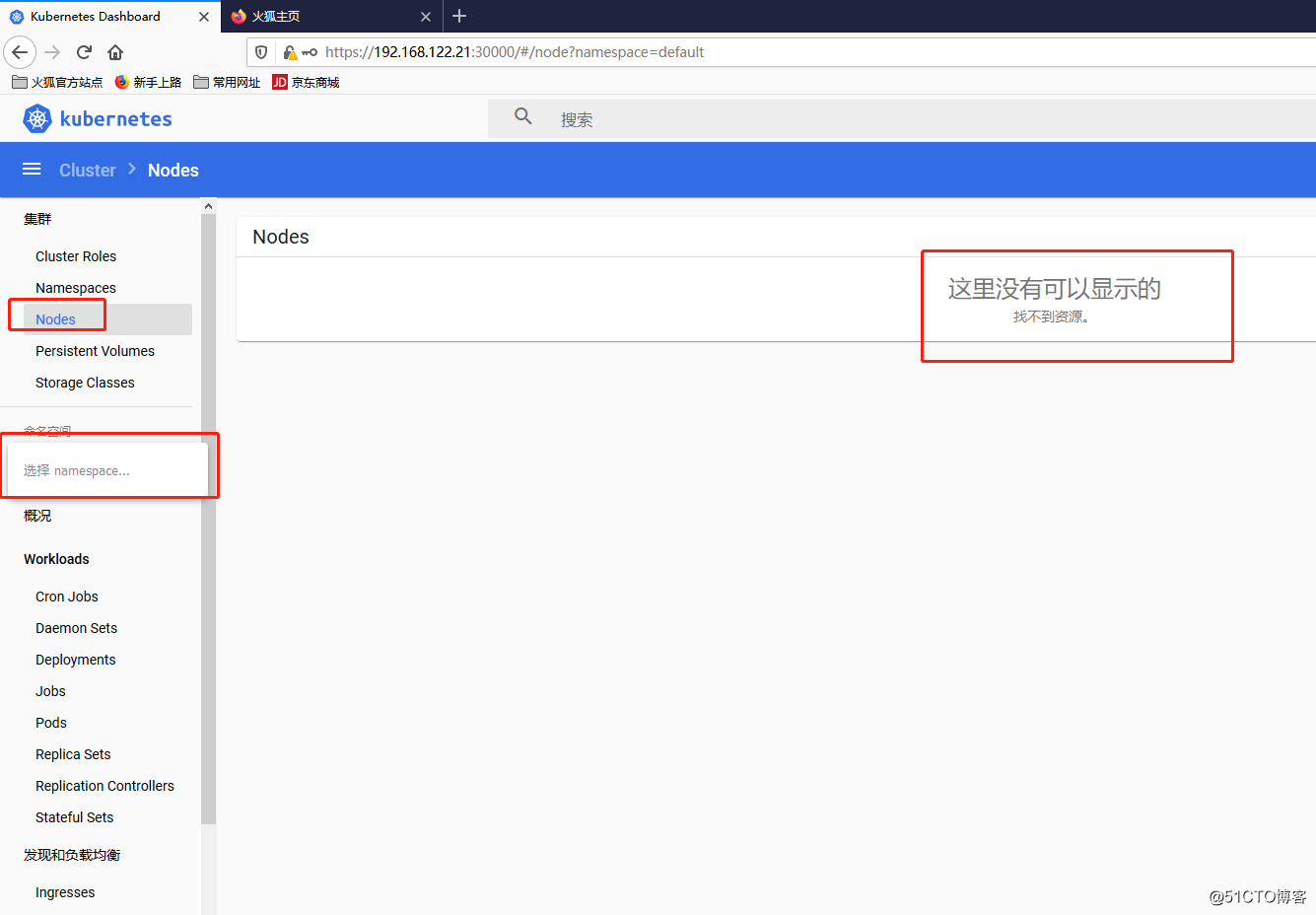

eyJhbGciOiJSUzI1NiIsImtpZCI6IlhJaDgyTWEzZ3FtWE9hTnJqUHN1akdHZU1pRHN3QWM2RUlQbUVOT0g0Qm8ifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi10NGh4eiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImUxOWYwMGI5LTI3MWItNDY5OS1hMjI3LTAzZWEyZTllMDE4YiIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.Mcw9zYSbTfhaYV38vlEaI0CSomYLtb05F2AIGpyT_PjIN8xmRdnIQhWGANBDuuDjdxScSXHOytHAKdj3pzBFVw_lfU5PseBg6hmdv_EPFPh2GvRd9XCs0TE5CVX8qfHkAGKc-DltA7jPwt5VqIFjnolLLGXB-exhiU73YMG_Xy9dZE-u0KKCvSq7XZDR87P_X30JYCAZXDlxcv8iOsuI4I-wlacm6LRF6HgyJqctJNVyE7seVVIgLqetAtt9LicTo6BBozbefHeK6zqRYeITU8AHhe-PLS4xo2fey5up77v4vyPHy_SEnKOtZcBzje1XKNPolGfiXItLYF7u95m9_A登录后如下展示,如果没有namespace可选,并且提示找不到资源 ,那么就是权限问题
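The secret name kubernetes-dashboard-token-t4hxz in the token command is specific to this cluster, because the suffix is generated. It comes from the output of `kubectl -n kubernetes-dashboard get secret`. The sketch below picks the matching name out of that listing. `dashboard_token_secret` is a hypothetical helper, and it assumes the default NAME and TYPE columns.

```shell
# dashboard_token_secret: read "kubectl -n kubernetes-dashboard get secret"
# output on stdin and print the name of the dashboard token secret.
# Hypothetical helper; assumes NAME in column 1 and TYPE in column 2.
dashboard_token_secret() {
  awk '$1 ~ /^kubernetes-dashboard-token-/ &&
       $2 == "kubernetes.io/service-account-token" { print $1; exit }'
}

# Possible usage on the master (not executed here):
#   secret=$(kubectl -n kubernetes-dashboard get secret | dashboard_token_secret)
#   kubectl -n kubernetes-dashboard describe secret "$secret"
```

The printed name can then replace kubernetes-dashboard-token-t4hxz in the describe command.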

The dashboard logs show the following:

[root@master01 ~]# kubectl logs -f -n kubernetes-dashboard kubernetes-dashboard-5d4dc8b976-sdxxt

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: namespaces is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "namespaces" in API group "" at the cluster scope

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/cronjob/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 Getting list of all cron jobs in the cluster

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: cronjobs.batch is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "cronjobs" in API group "batch" in the namespace "default"

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 POST /api/v1/token/refresh request from 192.168.122.21:7788: { contents hidden }

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/daemonset/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/deployment/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: daemonsets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "daemonsets" in API group "apps" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/csrftoken/token request from 192.168.122.21:7788:

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 Getting list of all deployments in the cluster

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: deployments.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "deployments" in API group "apps" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicasets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicasets" in API group "apps" in the namespace "default"

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/job/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/pod/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 Getting list of all jobs in the cluster

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: jobs.batch is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "jobs" in API group "batch" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 Getting list of all pods in the cluster

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

2020/04/08 01:54:31 Getting pod metrics

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/replicaset/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 Getting list of all replica sets in the cluster

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Incoming HTTP/2.0 GET /api/v1/replicationcontroller/default?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicasets.apps is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicasets" in API group "apps" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: pods is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "pods" in API group "" in the namespace "default"

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: events is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "events" in API group "" in the namespace "default"

2020/04/08 01:54:31 [2020-04-08T01:54:31Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 01:54:31 Getting list of all replication controllers in the cluster

2020/04/08 01:54:31 Non-critical error occurred during resource retrieval: replicationcontrollers is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "replicationcontrollers" in API group "" in the namespace "default"

Solution

[root@master01 ~]# kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --group=system:serviceaccount

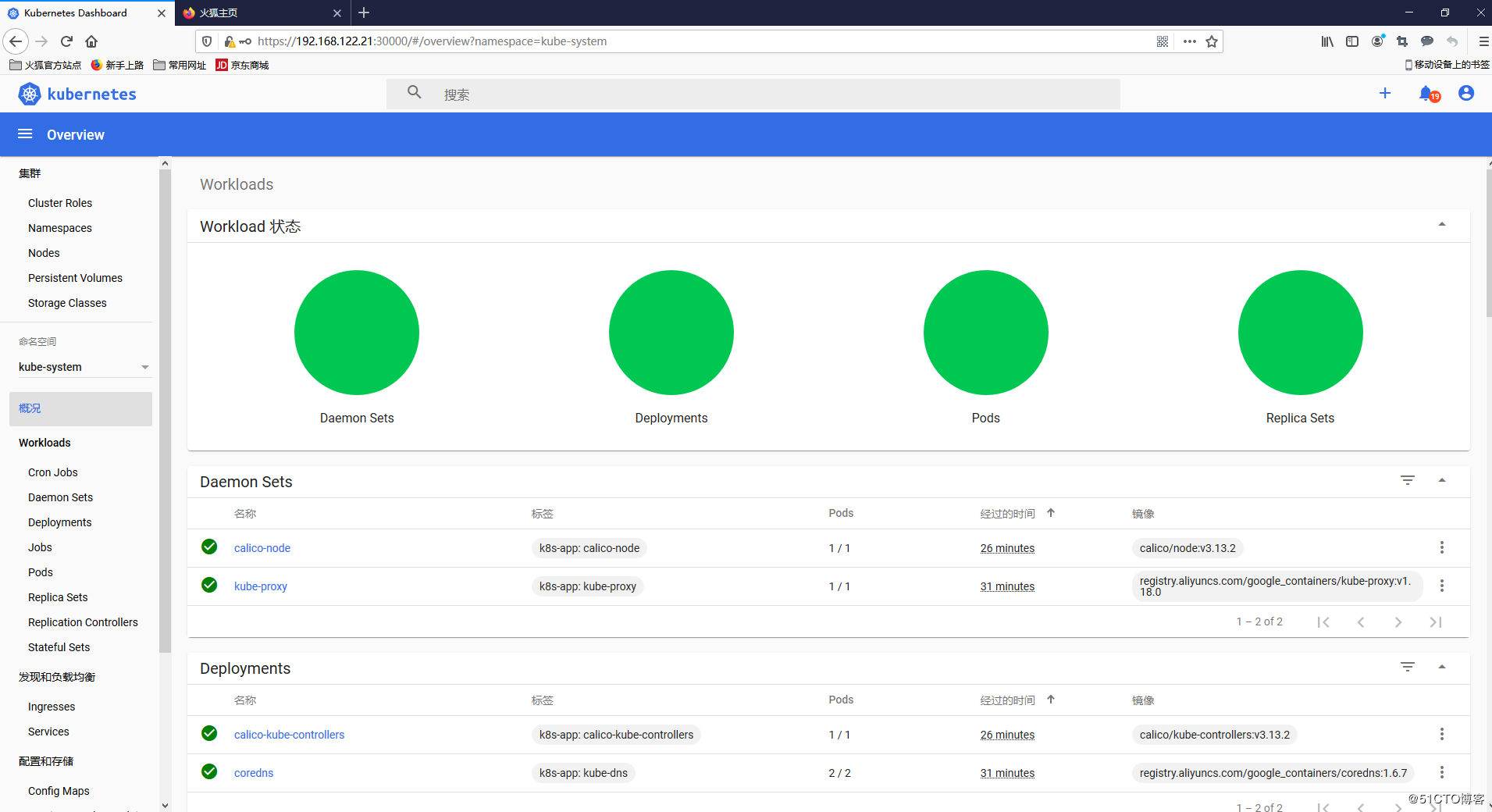

clusterrolebinding.rbac.authorization.k8s.io/serviceaccount-cluster-admin created查看dashboard日志

[root@master01 ~]# kubectl logs -f -n kubernetes-dashboard kubernetes-dashboard-5d4dc8b976-sdxx

2020/04/08 02:07:03 Getting list of namespaces

2020/04/08 02:07:03 [2020-04-08T02:07:03Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:

2020/04/08 02:07:08 Getting list of namespaces

2020/04/08 02:07:08 [2020-04-08T02:07:08Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:

2020/04/08 02:07:13 Getting list of namespaces

2020/04/08 02:07:13 [2020-04-08T02:07:13Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:

2020/04/08 02:07:18 Getting list of namespaces

2020/04/08 02:07:18 [2020-04-08T02:07:18Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:

2020/04/08 02:07:23 Getting list of namespaces

2020/04/08 02:07:23 [2020-04-08T02:07:23Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Incoming HTTP/2.0 GET /api/v1/namespace request from 192.168.122.21:7788:

2020/04/08 02:07:28 Getting list of namespaces

2020/04/08 02:07:28 [2020-04-08T02:07:28Z] Outcoming response to 192.168.122.21:7788 with 200 status code

2020/04/08 02:07:33 [2020-04-08T02:07:33Z] Incoming HTTP/2.0 GET /api/v1/node?itemsPerPage=10&page=1&sortBy=d,creationTimestamp request from 192.168.122.21:7788:

2020/04/08 02:07:33 [2020-04-08T02:07:33Z] Outcoming response to 192.168.122.21:7788 with 200 status code

Now check the dashboard again, and the resources are displayed.
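The forbidden errors in the log name the user system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard, which is how RBAC refers to a service account. The sketch below shows how that name is composed and prints a binding command for exactly one account. Both helpers are hypothetical and only echo text; nothing is applied to the cluster.

```shell
# sa_user NAMESPACE NAME: print the RBAC user name of a service account.
sa_user() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# print_admin_binding BINDING NAMESPACE NAME: echo (do not run) a command
# that binds the cluster-admin ClusterRole to one service account.
print_admin_binding() {
  echo "kubectl create clusterrolebinding $1 --clusterrole=cluster-admin --user=$(sa_user "$2" "$3")"
}
```

`print_admin_binding serviceaccount-cluster-admin kubernetes-dashboard kubernetes-dashboard` prints the command for the dashboard account. kubectl also accepts `--serviceaccount=kubernetes-dashboard:kubernetes-dashboard` in place of `--user`. cluster-admin grants full access, so on anything other than a test cluster a narrower role such as the built-in `view` ClusterRole may be a better fit.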

Kubernetes中文社区


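[preflight] You can also perform this action

It gets to this step and just hangs there.

Which image repository are you using?

In step 2, "Install common packages", I keep getting this error: "Error: Failed to download metadata for repo 'base'". How can I fix it?

You can first run yum makecache to test whether the yum repository is usable. Can the machine reach the internet?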

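I tested the repository the way you suggested and got the following error:

[rui@master01 ~]$ yum makecache

CentOS-8 - Base - mirrors.aliyun.com 6.1 kB/s | 2.2 MB 06:10

Failed to download metadata for repo 'base'

Error: Failed to download metadata for repo 'base'

The machine can reach the internet.

yum repolist

It works now and the installation succeeded. Thank you.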

[root@master01 ~]# kubeadm token create

W0416 04:33:13.596384 24812 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

d95rgn.feum3u558ifxzitm

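The dashboard error I ran into is a bit different from yours:

Non-critical error occurred during resource retrieval: namespaces is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "namespaces" in API group "" at the cluster scope

That is a permissions problem too.

How do I solve this permissions problem?

kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard

Solved perfectly: bind the role to the user.

error: exactly one NAME is required, got 3

See 'kubectl create clusterrolebinding -h' for help and examples

This is what I get when I use your command...

--user: mind the spaces between the arguments.

--user: there are two hyphens before user (the page may render them as a single dash, which breaks the command when copied).

I ran this command and still cannot list namespaces. What is going on?

How did you solve it in the end?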

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

Unfortunately, an error has occurred:

timed out waiting for the condition

This error is likely caused by:

- The kubelet is not running

- The kubelet is unhealthy due to a misconfiguration of the node in some way (required cgroups disabled)

If you are on a systemd-powered system, you can try to troubleshoot the error with the following commands:

- 'systemctl status kubelet'

- 'journalctl -xeu kubelet'

Additionally, a control plane component may have crashed or exited when started by the container runtime.

To troubleshoot, list all containers using your preferred container runtimes CLI.

Here is one example how you may list all Kubernetes containers running in docker:

- 'docker ps -a | grep kube | grep -v pause'

Once you have found the failing container, you can inspect its logs with:

- 'docker logs CONTAINERID'

error execution phase wait-control-plane: couldn't initialize a Kubernetes cluster

To see the stack trace of this error execute with --v=5 or higher

kube-system coredns-78d4cf999f-26znp 0/1 Pending 0 3m

kube-system coredns-78d4cf999f-jmq8x 0/1 Pending 0 3m

The pods are still Pending after installing the network.

Check whether the calico pods started successfully.

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

--2020-05-08 07:15:50-- https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 0.0.0.0, ::

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|0.0.0.0|:443... failed: Connection refused.

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|::|:443... failed: Connection refused.

What is causing this?

A network problem.

wget --no-check-certificate https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

Just skip the SSL verification.

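Do these addresses have to be the same as yours? --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16

These addresses can be changed, as long as they do not conflict with the host network addresses.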

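Installation complete. I hit quite a few pitfalls, but overall it went smoothly. The article leaves out one fairly important small step, perhaps because the author was nearly done writing and happily cut a corner:

Use kubectl -n kubernetes-dashboard get secret to list the secrets.

Thanks a lot for the reminder.

So that's it. I was wondering where the value kubernetes-dashboard-token-t4hxz came from, orz

Thanks for the reminder.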

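Hello, when I open the dashboard in a browser I always get: Client sent an HTTP request to an HTTPS server. What is the reason?

Try a different browser.

It works now, thank you. The http needed to be changed to https.

How do I enable HPA on a Kubernetes cluster installed with kubeadm?

After rebooting the server, how do I start the Kubernetes components?

Generally it is enough for the docker and kubelet services to start at boot (systemctl enable).

Thanks.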

For the kubernetes-dashboard role binding, --user is the correct flag. I am on 1.18.2:

kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard

namespaces is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "namespaces" in API group "" at the cluster scope

How did you all solve this permissions problem?

secrets is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "secrets" in API group "" in the namespace "default"

At the final insufficient-permissions step, whichever command I enter gives an error:

storageclasses.storage.k8s.io is forbidden: User "system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard" cannot list resource "storageclasses" in API group "storage.k8s.io" at the cluster scope

Remove the old binding first: kubectl delete clusterrolebinding serviceaccount-cluster-admin

kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard

It works now, thanks very much.

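How did you set up the color scheme of your shell?

What do you mean, the shell color scheme? It is generated automatically by the platform's editor.

The kubectl create -f recommended.yaml command gives:

Error from server (NotFound): error when creating "recommended.yaml": namespaces "kubernetes-dashboard" not found

What is the problem? I'm a complete beginner. Is it that the image for "kubernetes-dashboard" was not found?

Same here. Did you solve it?

How do I fix this? I hit the same problem and have not found a solution yet. It is driving me crazy!

Same here. Did you solve it?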

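This site can't be reached: 192.168.56.105 took too long to respond.

https://192.168.56.105:30000/

After a successful install I cannot open the address above. Does anyone know what else I need to do?

It worked after rebooting the server.

Could you add a step on how to build a highly available setup with 3 masters?

Hello, the installation went smoothly. Could you publish a high-availability setup with 3 masters?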

kubeadm join 192.168.2.201:6443 --token s7ztyn.92m8ldaal0f41nov --discovery-token-ca-cert-hash sha256:7b6c0b714820cbc997cce08e71502b08380528d7cf5afd94732ccd1aafdf8212 --v=5

Adding a worker node fails with the error below. How can I fix it?

I0622 21:56:31.166767 7915 token.go:215] [discovery] Failed to request cluster-info, will try again: Get https://192.168.2.201:6443/api/v1/namespaces/kube-public/configmaps/cluster-info?timeout=10s: x509: certificate has expired or is not yet valid

Solved. It was a time zone problem.

How do I renew the certificates? Adding a node gives this error:

I0622 21:56:31.166767 7915 token.go:215] [discovery] Failed to request cluster-info, will try again: Get https://192.168.2.201:6443/api/v1/namespaces/kube-public/configmaps/cluster-info?timeout=10s: x509: certificate has expired or is not yet valid

Solved. It was a time zone problem.

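A quick question: the UI loads, but it is blank inside and the namespace list is empty. How do I fix that?

Everything looks fine after installation, but pods cannot reach each other. Do I need to specify the API server and etcd parameters when installing calico?

Thanks.

How can the images be downloaded in advance? My intranet environment has no internet access.

Hello, the installation went smoothly, but kubectl get cs reports:

scheduler Unhealthy Get http://127.0.0.1:10251/healthz: dial tcp 127.0.0.1:10251: connect: connection refused

controller-manager Unhealthy Get http://127.0.0.1:10252/healthz: dial tcp 127.0.0.1:10252: connect: connection refused

How should I handle this? Thanks.

Logging in with the token fails with: Unauthorized (401): Invalid credentials provided. How do I solve this?

This article is incomplete; it does not cover how to join a node to the master.

Error from server (NotFound): error when creating "recommended.yaml": namespaces "kubernetes-dashboard" not found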

[root@localhost ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

--2020-08-31 22:53:52-- https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 0.0.0.0, ::

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|0.0.0.0|:443... failed: Connection refused.

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|::|:443... failed: Connection refused.

What is causing this?

On 1.19 it should be: kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard

For kubeadm init, remember to replace apiserver-advertise-address with your own IP address. If you install in a VM and suspended it at some point, you need to run nmcli n on manually to turn networking back on.

After the cluster was initialized, the kubectl command could not be found:

[root@master ~]# source <(kubectl completion bash)

-bash: kubectl: command not found

What is going on here?

My init command was:

kubeadm init --kubernetes-version=1.19.3 \

--apiserver-advertise-address=192.168.2.126 \

--image-repository registry.aliyuncs.com/google_containers \

--service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16

I followed your steps.

kubeadm init --kubernetes-version=1.18.0 --apiserver-advertise-address=192.168.5.110 --image-repository registry.aliyuncs.com/google_containers --service-cidr=10.10.0.0/16 --pod-network-cidr=10.122.0.0/16

The errors start here.

[ERROR KubeletVersion]: the kubelet version is higher than the control plane version. This is not a supported version skew and may lead to a malfunctional cluster. Kubelet version: "1.20.2" Control plane version: "20.10.0"
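The version-skew error in the last comment is what tends to happen when `yum install kubectl kubelet kubeadm` pulls the newest packages while `kubeadm init` is asked for an older --kubernetes-version. One way to avoid it is to pin the packages to the version being initialized. The sketch below only builds the package list; `pinned_packages` is a hypothetical helper, and the exact version strings available depend on the repository.

```shell
# pinned_packages VERSION: print kubelet, kubeadm and kubectl package
# specs pinned to one version, suitable for passing to yum install.
# Hypothetical helper for illustration.
pinned_packages() {
  local v=$1 pkg out=""
  for pkg in kubelet kubeadm kubectl; do
    out+="${pkg}-${v} "
  done
  echo "${out% }"   # drop the trailing space
}
```

It could be used as `yum install -y $(pinned_packages 1.18.0)`, so that the kubelet matches the version passed to kubeadm init.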