什么是Kubernetes

Kubernetes(通常称为k8s)是一个开源的容器编排系统,旨在提供一个简单而有效的平台,用于跨主机集群自动部署,扩展和操作应用程序容器。

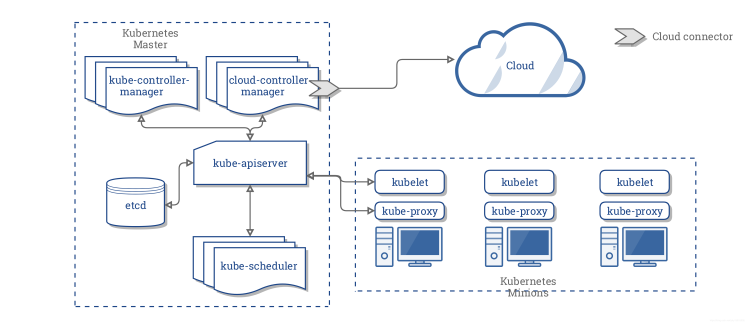

Kubernetes在架构上拥有一系列组件,从而实现了一种应用程序部署,维护和扩展的机制。

这些组件被设计为松散耦合和可伸缩的,以便它们可以满足各种工作负载。

Kubernetes系统的可扩展性很大程度上由Kubernetes API提供,该API可以用作可扩展的内部组件。

Kubernetes主要包含以下核心组件:

- etcd 用作所有集群数据的存储

- apiserver 提供资源操作的入口,并提供身份验证,授权,访问控制,API注册和发现的机制

- controller manager 负责维护集群的状态,例如故障检测,自动扩展,滚动更新等。

- scheduler 负责调度资源,并根据预定的调度策略将Pod调度到相应的节点。

- kubelet 负责维护容器的生命周期,还负责管理存储卷和网络

- Container runtime 负责镜像管理以及Pod和容器(CRI)的运行时

- kube-proxy 负责为kubernetes-service提供集群中的服务发现和负载均衡

除了核心组件之外,还有一些不错的组件:

- kube-dns 负责为整个集群提供DNS服务

- Ingress Controller 提供服务的外部网络访问

- Heapster 提供资源监控

- Dashboard 提供GUI图形化界面

- Federation 提供跨可用区的集群管理

- Fluentd-elasticsearch 提供集群日志收集,存储和查询

Kubernetes和数据库

数据库容器化是最近的热门话题,Kubernetes可以为数据库带来什么好处?

- 故障恢复:Kubernetes失败时将重新启动数据库应用程序,或将数据库迁移到集群中的其他运行状况正常的节点

- 存储管理:Kubernetes提供了各种存储管理解决方案,以便数据库可以采用不同的存储系统

- 负载均衡:Kubernetes Service通过将外部网络流量分配给不同的数据库副本,来提供负载均衡

- 横向可扩展性:Kubernetes可以根据当前集群的资源利用率来扩缩容,从而提高资源利用率

当前,许多数据库(例如MySQL,MongoDB和TiDB)都可以在Kubernetes上正常工作。

Kubernetes上的Nebula Graph

Nebula Graph是一个分布式的开源图形数据库,由图形化(查询引擎),存储(数据存储)和metad(元数据)组成。Kubernetes为Nebula Graph带来以下好处:

- Kubernetes调整了graphd,metad和storaged 的不同副本之间的工作量。他们三个可以通过Kubernetes提供的dns来发现服务。

- 无论使用哪种存储系统(例如云磁盘或本地磁盘),Kubernetes都按storageclass,pvc和pv封装基础存储的详细信息。

- Kubernetes可以在几秒钟内部署Nebula Graph集群并自动升级集群,实现用户无感知。

- Kubernetes支持自我修复。

- Kubernetes可以水平扩展集群,来提高Nebula性能。

在下文中,我们将向你展示使用Kubernetes部署Nebula Graph的详细信息。

部署

软件和硬件要求

以下列表是本文中部署所涉及的软件和硬件要求:

- 操作系统是CentOS-7.6.1810 x86_64

- 虚拟机配置:

- 4个CPU

- 8G内存

- 50G系统盘

- 50G数据盘A

- 50G数据盘B

- Kubernetes集群是v1.16版本。

- 使用本地PV作为数据存储。

集群拓扑

以下是群集拓扑:

| 服务器IP | Nebula服务 | 角色 |

|---|---|---|

| 192.168.0.1 | k8s-master | |

| 192.168.0.2 | graphd, metad-0, storaged-0 | k8s-slave |

| 192.168.0.3 | graphd, metad-1, storaged-1 | k8s-slave |

| 192.168.0.4 | graphd, metad-2, storaged-2 | k8s-slave |

要部署的组件

- 安装Helm

- 准备本地磁盘,并安装本地存储卷插件

- 安装 Nebula Graph集群

- 安装入口控制器(ingress-controller)

安装Helm

Helm是Kubernetes软件包管理器,类似于CentOS上的yum或Ubuntu上的apt-get。Helm使得Kubernetes集群部署更加容易。由于本文没有对Helm进行详细介绍,因此请阅读Helm入门指南以了解有关Helm的更多信息。

下载并安装Helm

在终端中使用以下命令安装Helm:

[root@nebula ~]# wget https://get.helm.sh/helm-v3.0.1-linux-amd64.tar.gz [root@nebula ~]# tar -zxvf helm/helm-v3.0.1-linux-amd64.tgz [root@nebula ~]# mv linux-amd64/helm /usr/bin/helm [root@nebula ~]# chmod +x /usr/bin/helm

查看Helm版本

你可以使用命令查看Helm版本,helm version输出如下所示:

version.BuildInfo{ Version:"v3.0.1", GitCommit:"7c22ef9ce89e0ebeb7125ba2ebf7d421f3e82ffa", GitTreeState:"clean", GoVersion:"go1.13.4" }

准备本地磁盘

下面的操作,请在每个节点配置:

创建挂载目录

[root@nebula ~]# sudo mkdir -p /mnt/disks

格式化数据磁盘

[root@nebula ~]# sudo mkfs.ext4 /dev/diskA [root@nebula ~]# sudo mkfs.ext4 /dev/diskB

挂载数据磁盘

[root@nebula ~]# DISKA_UUID=$(blkid -s UUID -o value /dev/diskA) [root@nebula ~]# DISKB_UUID=$(blkid -s UUID -o value /dev/diskB) [root@nebula ~]# sudo mkdir /mnt/disks/$DISKA_UUID [root@nebula ~]# sudo mkdir /mnt/disks/$DISKB_UUID [root@nebula ~]# sudo mount -t ext4 /dev/diskA /mnt/disks/$DISKA_UUID [root@nebula ~]# sudo mount -t ext4 /dev/diskB /mnt/disks/$DISKB_UUID [root@nebula ~]# echo UUID=`sudo blkid -s UUID -o value /dev/diskA` /mnt/disks/$DISKA_UUID ext4 defaults 0 2 | sudo tee -a /etc/fstab [root@nebula ~]# echo UUID=`sudo blkid -s UUID -o value /dev/diskB` /mnt/disks/$DISKB_UUID ext4 defaults 0 2 | sudo tee -a /etc/fstab

部署本地存储卷插件

[root@nebula ~]# curl https://github.com/kubernetes-sigs/sig-storage-local-static-provisioner/archive/v2.3.3.zip [root@nebula ~]# unzip v2.3.3.zip

修改v2.3.3/helm/provisioner/values.yaml文件。

# # Common options. # common: # # Defines whether to generate service account and role bindings. # rbac: true # # Defines the namespace where provisioner runs # namespace: default # # Defines whether to create provisioner namespace # createNamespace: false # # Beta PV.NodeAffinity field is used by default. If running against pre-1.10 # k8s version, the `useAlphaAPI` flag must be enabled in the configMap. # useAlphaAPI: false # # Indicates if PVs should be dependents of the owner Node. # setPVOwnerRef: false # # Provisioner clean volumes in process by default. If set to true, provisioner # will use Jobs to clean. # useJobForCleaning: false # # Provisioner name contains Node.UID by default. If set to true, the provisioner # name will only use Node.Name. # useNodeNameOnly: false # # Resync period in reflectors will be random between minResyncPeriod and # 2*minResyncPeriod. Default: 5m0s. # #minResyncPeriod: 5m0s # # Defines the name of configmap used by Provisioner # configMapName: "local-provisioner-config" # # Enables or disables Pod Security Policy creation and binding # podSecurityPolicy: false # # Configure storage classes. # classes: - name: fast-disks # Defines name of storage classes. # Path on the host where local volumes of this storage class are mounted # under. hostDir: /mnt/fast-disks # Optionally specify mount path of local volumes. By default, we use same # path as hostDir in container. # mountDir: /mnt/fast-disks # The volume mode of created PersistentVolume object. Default to Filesystem # if not specified. volumeMode: Filesystem # Filesystem type to mount. # It applies only when the source path is a block device, # and desire volume mode is Filesystem. # Must be a filesystem type supported by the host operating system. fsType: ext4 blockCleanerCommand: # Do a quick reset of the block device during its cleanup. # - "/scripts/quick_reset.sh" # or use dd to zero out block dev in two iterations by uncommenting these lines # - "/scripts/dd_zero.sh" # - "2" # or run shred utility for 2 iteration.s - "/scripts/shred.sh" - "2" # or blkdiscard utility by uncommenting the line below. # - "/scripts/blkdiscard.sh" # Uncomment to create storage class object with default configuration. # storageClass: true # Uncomment to create storage class object and configure it. # storageClass: # reclaimPolicy: Delete # Available reclaim policies: Delete/Retain, defaults: Delete. # isDefaultClass: true # set as default class # # Configure DaemonSet for provisioner. # daemonset: # # Defines the name of a Provisioner # name: "local-volume-provisioner" # # Defines Provisioner's image name including container registry. # image: quay.io/external_storage/local-volume-provisioner:v2.3.3 # # Defines Image download policy, see kubernetes documentation for available values. # #imagePullPolicy: Always # # Defines a name of the service account which Provisioner will use to communicate with API server. # serviceAccount: local-storage-admin # # Defines a name of the Pod Priority Class to use with the Provisioner DaemonSet # # Note that if you want to make it critical, specify "system-cluster-critical" # or "system-node-critical" and deploy in kube-system namespace. # Ref: https://k8s.io/docs/tasks/administer-cluster/guaranteed-scheduling-critical-addon-pods/#marking-pod-as-critical # #priorityClassName: system-node-critical # If configured, nodeSelector will add a nodeSelector field to the DaemonSet PodSpec. # # NodeSelector constraint for local-volume-provisioner scheduling to nodes. # Ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#nodeselector nodeSelector: {} # # If configured KubeConfigEnv will (optionally) specify the location of kubeconfig file on the node. # kubeConfigEnv: KUBECONFIG # # List of node labels to be copied to the PVs created by the provisioner in a format: # # nodeLabels: # - failure-domain.beta.kubernetes.io/zone # - failure-domain.beta.kubernetes.io/region # # If configured, tolerations will add a toleration field to the DaemonSet PodSpec. # # Node tolerations for local-volume-provisioner scheduling to nodes with taints. # Ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/ tolerations: [] # # If configured, resources will set the requests/limits field to the Daemonset PodSpec. # Ref: https://kubernetes.io/docs/concepts/configuration/manage-compute-resources-container/ resources: {} # # Configure Prometheus monitoring # prometheus: operator: ## Are you using Prometheus Operator? enabled: false serviceMonitor: ## Interval at which Prometheus scrapes the provisioner interval: 10s # Namespace Prometheus is installed in namespace: monitoring ## Defaults to what is used if you follow CoreOS [Prometheus Install Instructions](https://github.com/coreos/prometheus-operator/tree/master/helm#tldr) ## [Prometheus Selector Label](https://github.com/coreos/prometheus-operator/blob/master/helm/prometheus/templates/prometheus.yaml#L65) ## [Kube Prometheus Selector Label](https://github.com/coreos/prometheus-operator/blob/master/helm/kube-prometheus/values.yaml#L298) selector: prometheus: kube-prometheus

修改hostDir: /mnt/fast-disks并# storageClass: true以hostDir: /mnt/disks和storageClass: true分别,然后运行:

# Installing [root@nebula ~]# helm install local-static-provisioner v2.3.3/helm/provisioner # List local-static-provisioner deployment [root@nebula ~]# helm list

部署Nebula Graph集群

下载NebulaHelm图包

# Downloading nebula [root@nebula ~]# wget https://github.com/vesoft-inc/nebula/archive/master.zip # Unzip [root@nebula ~]# unzip master.zip

Kubernetes从节点打标签

以下是Kubernetes节点的列表。我们需要设置工作节点的调度标签。我们可以为192.168.0.2,192.168.0.3,192.168.0.4节点打上nebula: “yes”的标签。

| 服务器IP | kubernetes角色 | 节点名称 |

|---|---|---|

| 192.168.0.1 | master | 192.168.0.1 |

| 192.168.0.2 | worker | 192.168.0.2 |

| 192.168.0.3 | worker | 192.168.0.3 |

| 192.168.0.4 | worker | 192.168.0.4 |

具体操作如下:

[root@nebula ~]# kubectl label node 192.168.0.2 nebula="yes" --overwrite [root@nebula ~]# kubectl label node 192.168.0.3 nebula="yes" --overwrite [root@nebula ~]# kubectl label node 192.168. ### Deploying Ingress-controller on one Node

修改Nebula Helm chart的默认值

以下是Nebula helm-chart 的目录列表:

master/kubernetes/

└── helm

├── Chart.yaml

├── templates

│ ├── configmap.yaml

│ ├── deployment.yaml

│ ├── _helpers.tpl

│ ├── ingress-configmap.yaml\

│ ├── NOTES.txt

│ ├── pdb.yaml

│ ├── service.yaml

│ └── statefulset.yaml

└── values.yaml

2 directories, 10 files

我们需要调整yaml文件中MetadHosts的值master/kubernetes/values.yaml,并将IP列表替换为我们上文k8s worker的IP。

MetadHosts: - 192.168.0.2:44500 - 192.168.0.3:44500 - 192.168.0.4:44500

通过Helm安装Nebula

# Installing [root@nebula ~]# helm install nebula master/kubernetes/helm # Checking [root@nebula ~]# helm status nebula # Checking nebula deployment on the k8s cluster [root@nebula ~]# kubectl get pod | grep nebula nebula-graphd-579d89c958-g2j2c 1/1 Running 0 1m nebula-graphd-579d89c958-p7829 1/1 Running 0 1m nebula-graphd-579d89c958-q74zx 1/1 Running 0 1m nebula-metad-0 1/1 Running 0 1m nebula-metad-1 1/1 Running 0 1m nebula-metad-2 1/1 Running 0 1m nebula-storaged-0 1/1 Running 0 1m nebula-storaged-1 1/1 Running 0 1m nebula-storaged-2 1/1 Running 0 1m

部署入口控制器(ingress-controller)

入口控制器是Kubernetes的附加组件之一。Kubernetes通过入口控制器向用户公开内部部署的服务。入口控制器还提供负载均衡功能,可以将外部访问分配给k8s中应用程序的不同副本。

选择要部署Ingress控制器的节点

[root@nebula ~]# kubectl get node NAME STATUS ROLES AGE VERSION 192.168.0.1 Ready master 82d v1.16.1 192.168.0.2 Ready <none> 82d v1.16.1 192.168.0.3 Ready <none> 82d v1.16.1 192.168.0.4 Ready <none> 82d v1.16.1 [root@nebula ~]# kubectl label node 192.168.0.4 ingress=yes

编辑ingress-nginx.yaml部署文件。

apiVersion: v1

kind: Namespace

metadata:

name: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: nginx-configuration

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: tcp-services

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

kind: ConfigMap

apiVersion: v1

metadata:

name: udp-services

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

name: nginx-ingress-clusterrole

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- endpoints

- nodes

- pods

- secrets

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes

verbs:

- get

- apiGroups:

- ""

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- "extensions"

- "networking.k8s.io"

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- ""

resources:

- events

verbs:

- create

- patch

- apiGroups:

- "extensions"

- "networking.k8s.io"

resources:

- ingresses/status

verbs:

- update

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: Role

metadata:

name: nginx-ingress-role

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

rules:

- apiGroups:

- ""

resources:

- configmaps

- pods

- secrets

- namespaces

verbs:

- get

- apiGroups:

- ""

resources:

- configmaps

resourceNames:

# Defaults to "<election-id>-<ingress-class>"

# Here: "<ingress-controller-leader>-<nginx>"

# This has to be adapted if you change either parameter

# when launching the nginx-ingress-controller.

- "ingress-controller-leader-nginx"

verbs:

- get

- update

- apiGroups:

- ""

resources:

- configmaps

verbs:

- create

- apiGroups:

- ""

resources:

- endpoints

verbs:

- get

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: RoleBinding

metadata:

name: nginx-ingress-role-nisa-binding

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: nginx-ingress-role

subjects:

- kind: ServiceAccount

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: nginx-ingress-clusterrole-nisa-binding

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: nginx-ingress-clusterrole

subjects:

- kind: ServiceAccount

name: nginx-ingress-serviceaccount

namespace: ingress-nginx

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: nginx-ingress-controller

namespace: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

spec:

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

template:

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/part-of: ingress-nginx

annotations:

prometheus.io/port: "10254"

prometheus.io/scrape: "true"

spec:

hostNetwork: true

tolerations:

- key: "node-role.kubernetes.io/master"

operator: "Exists"

effect: "NoSchedule"

affinity:

podAntiAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

- labelSelector:

matchExpressions:

- key: app.kubernetes.io/name

operator: In

values:

- ingress-nginx

topologyKey: "ingress-nginx.kubernetes.io/master"

nodeSelector:

ingress: "yes"

serviceAccountName: nginx-ingress-serviceaccount

containers:

- name: nginx-ingress-controller

image: quay.io/kubernetes-ingress-controller/nginx-ingress-controller-amd64:0.26.1

args:

- /nginx-ingress-controller

- --configmap=$(POD_NAMESPACE)/nginx-configuration

- --tcp-services-configmap=default/graphd-services

- --udp-services-configmap=$(POD_NAMESPACE)/udp-services

- --publish-service=$(POD_NAMESPACE)/ingress-nginx

- --annotations-prefix=nginx.ingress.kubernetes.io

- --http-port=8000

securityContext:

allowPrivilegeEscalation: true

capabilities:

drop:

- ALL

add:

- NET_BIND_SERVICE

# www-data -> 33

runAsUser: 33

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

ports:

- name: http

containerPort: 80

- name: https

containerPort: 443

livenessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

部署ingress-nginx。

# Deployment [root@nebula ~]# kubectl create -f ingress-nginx.yaml # View deployment [root@nebula ~]# kubectl get pod -n ingress-nginx NAME READY STATUS RESTARTS AGE nginx-ingress-controller-mmms7 1/1 Running 2 1m

在Kubernetes中访问Nebula Graph集群

查看ingress-nginx位于哪个节点:

[root@nebula ~]# kubectl get node -l ingress=yes -owide NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME nebula.node23 Ready <none> 1d v1.16.1 192.168.8.23 <none> CentOS Linux 7 (Core) 7.6.1810.el7.x86_64 docker://19.3.3

访问 Nebula Graph 集群:

[root@nebula ~]# docker run --rm -ti --net=host vesoft/nebula-console:nightly --addr=192.168.8.23 --port=3699

常问问题

- 如何部署Kubernetes集群

请参考–高可用Kubernetes集群部署的官方文档。

你也可以参考使用Minikube安装Kubernetes来部署本地Kubernetes集群。

- 如何修改Nebula Graph集群参数?

使用helm install时,可以使用–set 覆盖中的默认变量values.yaml。有关详细信息,请参阅Helm。

- 如何观察Nebula 集群状态?

你可以使用kubectl get pod | grep nebula命令或通过kubernetes仪表板。

- 如何使用其他磁盘类型?

请参考存储文档。

译文链接:https://dzone.com/articles/how-to-deploy-nebula-graph-on-kubernetes-a-step-by

Kubernetes中文社区

Kubernetes中文社区

登录后评论

立即登录 注册